Antarctic biodiversity

Mapping Antarctic moss and lichen with hyperspectral drones

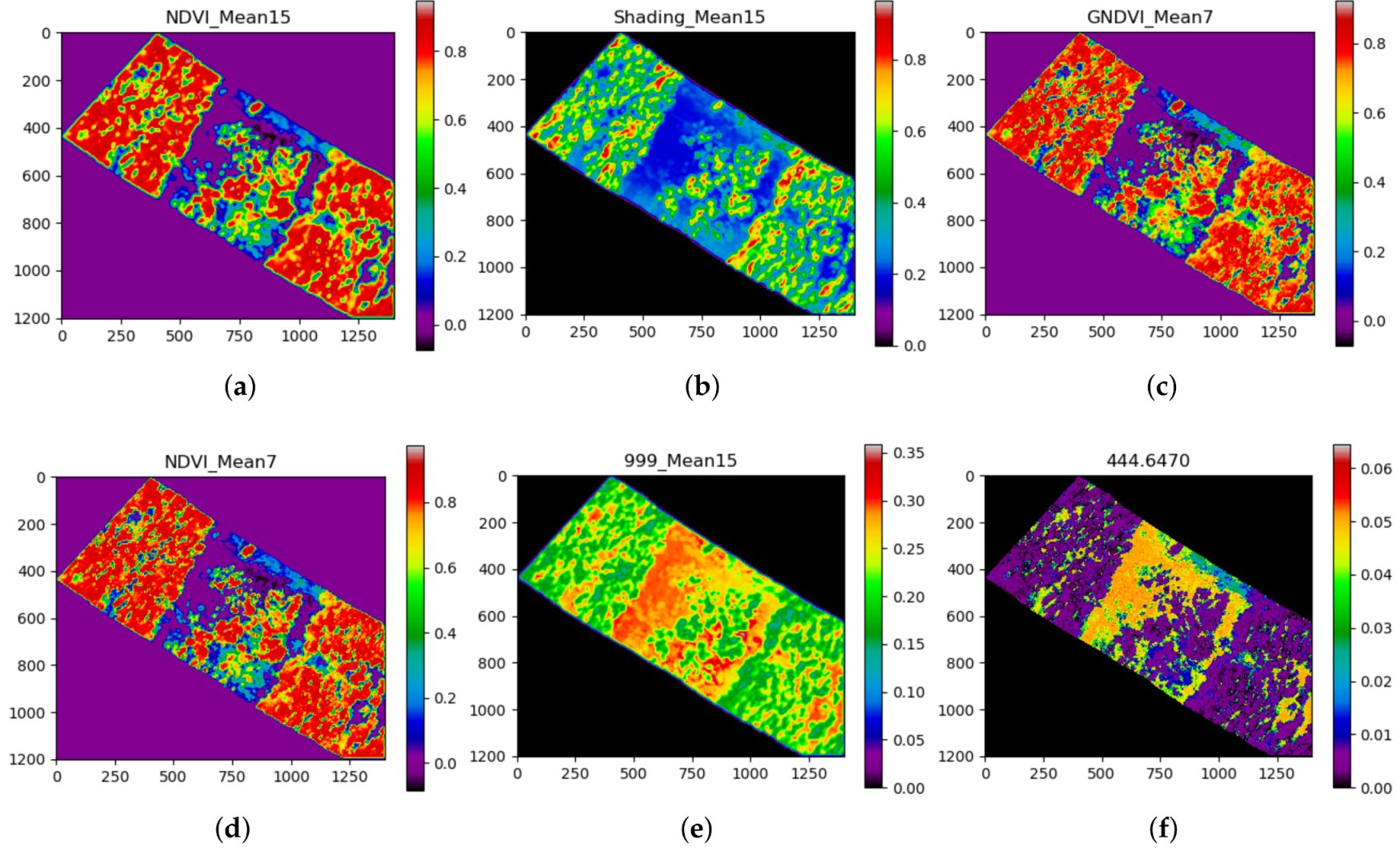

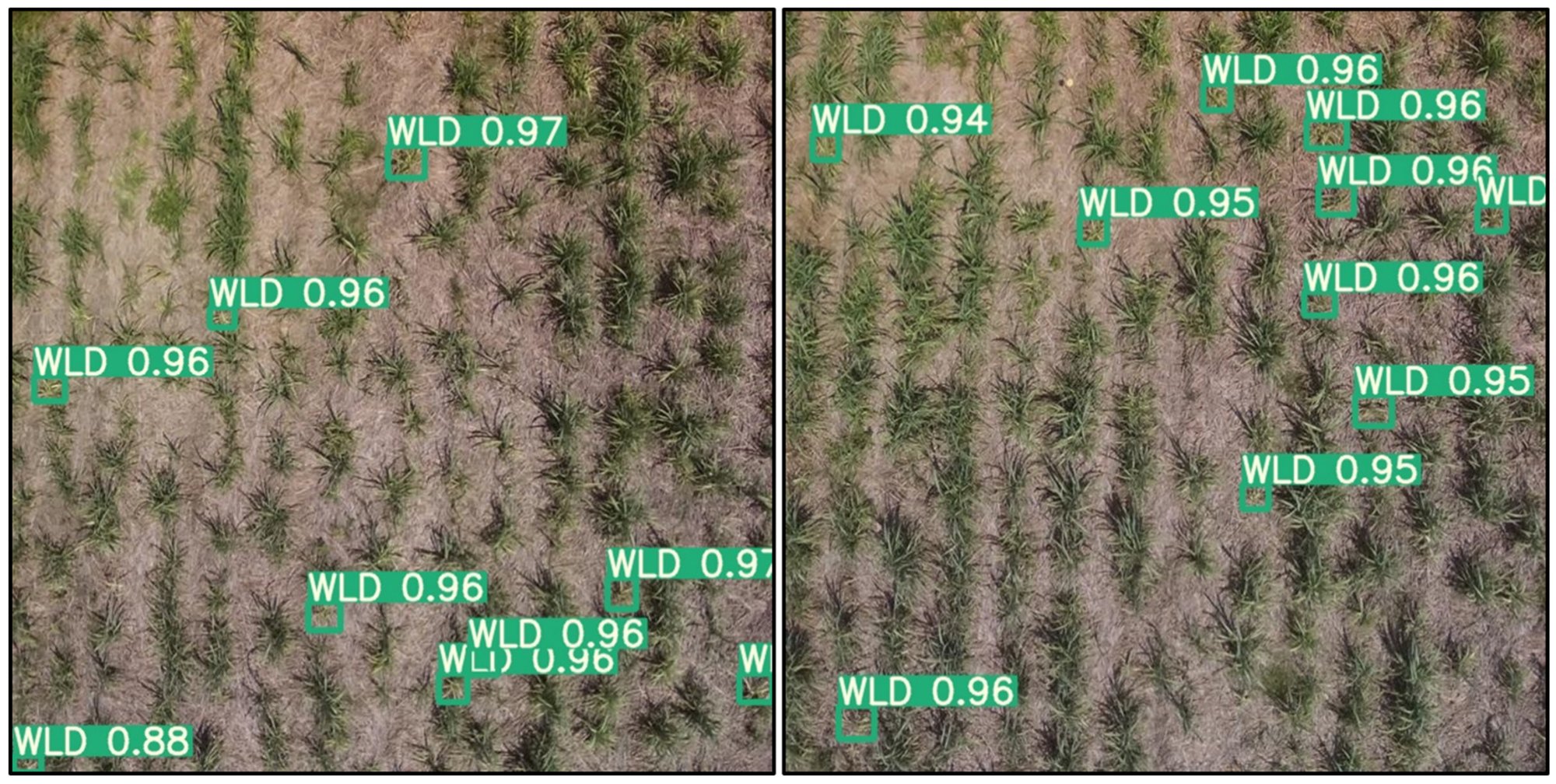

I lead UAV-based vegetation mapping at Antarctic Specially Protected Area (ASPA) 135, Robinson Ridge and Bunger Hills. The work combines hyperspectral and multispectral imaging with deep learning to classify moss and lichen at sub-centimetre resolution.

The mapping feeds directly into long-term conservation baselines for Antarctica and is carried out as part of Securing Antarctica's Environmental Future (SAEF). Field campaigns to date have validated the workflow against ground spectra and quadrat surveys across multiple seasons.

Key outputs

Collaborators: QUT Centre for Robotics, SAEF, University of Wollongong, Australian Antarctic Division